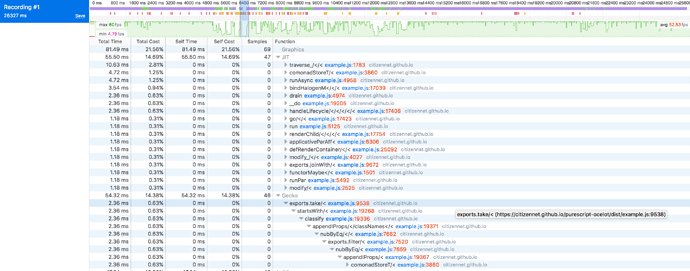

Update: it turns out the root of the problem was switching to code points instead of code units for string functions, coupled with a terribly inefficient function that was causing major VDOM redraws. Lessons:

- browser profiling is your friend

- use code points for correctness, but know they can be much slower (index-based operations have to walk the string), so use code units where performance matters
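To make the second lesson concrete, here is a minimal sketch of the difference at the JavaScript level, which is what PureScript strings compile down to. The helper names (`lengthCodeUnits`, `lengthCodePoints`, `takeCodeUnits`, `takeCodePoints`) are hypothetical and written only for illustration; they are not the actual implementations from the PureScript string libraries, just an approximation of why one family is cheap and the other has to scan.

```typescript
// JavaScript strings are sequences of UTF-16 code units.
// A character outside the Basic Multilingual Plane (e.g. an emoji)
// occupies two code units, called a surrogate pair.

// True when index i holds a high surrogate followed by a low surrogate.
function isSurrogatePairAt(s: string, i: number): boolean {
  const hi = s.charCodeAt(i);
  if (hi < 0xd800 || hi > 0xdbff || i + 1 >= s.length) return false;
  const lo = s.charCodeAt(i + 1);
  return lo >= 0xdc00 && lo <= 0xdfff;
}

// Code-unit length: a stored property, constant time.
function lengthCodeUnits(s: string): number {
  return s.length;
}

// Code-point length: must walk the whole string, linear time.
function lengthCodePoints(s: string): number {
  let count = 0;
  let i = 0;
  while (i < s.length) {
    i += isSurrogatePairAt(s, i) ? 2 : 1;
    count++;
  }
  return count;
}

// Take the first n code units: fast, but may cut a surrogate pair in half.
function takeCodeUnits(n: number, s: string): string {
  return s.slice(0, n);
}

// Take the first n code points: never splits a pair, but scans from the start.
function takeCodePoints(n: number, s: string): string {
  let i = 0;
  let taken = 0;
  while (i < s.length && taken < n) {
    i += isSurrogatePairAt(s, i) ? 2 : 1;
    taken++;
  }
  return s.slice(0, i);
}

// "a", U+1F600 (written as its surrogate pair), "b"
const sample = "a\uD83D\uDE00b";

console.log(lengthCodeUnits(sample));  // 4
console.log(lengthCodePoints(sample)); // 3
console.log(takeCodeUnits(2, sample) === "a\uD83D");        // true: lone high surrogate
console.log(takeCodePoints(2, sample) === "a\uD83D\uDE00"); // true: whole emoji kept
```

In a hot path such as a render function that runs on every keystroke, the scanning versions add work proportional to the string length on each call, which is presumably how a single inefficient function could end up dominating redraw time in a form with dozens of fields.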

We have a large Halogen application in production. Most of the pages involved are pretty simple, but we have a few large, complex forms. The forms have a few dozen fields and some of them are fairly complex typeaheads, calendar pickers, and so on, while most of them are text fields.

Usually these large forms render quickly and register updates instantly. However, we’ve just migrated the application to PureScript 0.12 and that’s no longer true. The input fields have become laggy, the typeaheads take a while to respond and open, and even animations and cursors are sluggish.

This has been a surprise because we changed no logic in the transition: we just changed imports around, deleted effect rows, and so on. The only places where functions changed were where we now use liftEffect or identity instead of their previous names.

Has anyone else experienced something like this after migrating a Halogen application to 0.12?

Troubleshooting

We’ve taken a few steps to check what’s going on.

- We verified that this code pre-0.12 worked properly (no lagginess)

- We verified we haven’t changed the underlying logic, just changed types and a few function names

- We verified that other, smaller pages do not have this same problem

- We verified that the form gets steadily faster as we delete fields from it; with only a handful of fields left it is snappy and responsive again

Possibilities

I can’t see how our code changes would have slowed this form down so dramatically, so I’ve started looking at our dependencies to see what has changed there. Perhaps we wrote horribly inefficient code in the first place and the problem is only being revealed now, or perhaps a dependency has become less efficient than it was. Either way, if the problem isn’t in our own changes, the dependencies are the next place to look.

Things that come to mind:

- Perhaps something that was previously stack-safe is now gobbling up memory in our code?

- Perhaps something has changed in the VDOM implementation under the hood?

- Perhaps some dependency of Halogen has become less efficient?

I haven’t yet had the time to do it, but I’d like to play around with a 0.12 Halogen project with some large pages and see if the issue is specific to our code base or if it affects all Halogen projects.

Related Questions

This is the first time I’ve needed to debug performance problems in a PureScript application and more specifically a Halogen application. I’m actually not so sure where to start analyzing to try and find a root cause.

Does anyone have pointers for drilling down into performance problems to start identifying some possible solutions?

Dependencies

"purescript-prelude": "^4.0.1",

"purescript-console": "^4.1.0",

"purescript-halogen": "^4.0.0",

"purescript-affjax": "^6.0.0",

"purescript-datetime": "^4.0.0",

"purescript-argonaut": "^4.0.1",

"purescript-formatters": "^4.0.0",

"purescript-generics-rep": "^6.0.0",

"purescript-newtype": "^3.0.0",

"purescript-css": "^4.0.0",

"purescript-remotedata": "^4.0.0",

"purescript-parallel": "^4.0.0",

"purescript-routing": "^8.0.0",

"purescript-read": "^1.0.1",

"purescript-record": "^1.0.0",

"purescript-profunctor-lenses": "^4.0.0",

"purescript-behaviors": "^7.0.0",

"purescript-email-validate": "^3.0.0",

"purescript-bigints": "^4.0.0",

"purescript-numbers": "^6.0.0",

"purescript-halogen-css": "^8.0.0"